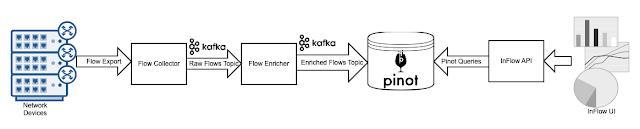

Real-time analytics on network flow data with Apache Pinot describes LinkedIn's flow ingestion and analytics pipeline for sFlow and IPFIX exports from network devices. The solution uses Apache Kafka message queues to connect LinkedIn's InFlow flow analyzer with the Apache Pinot datastore to support low-latency queries. The article describes the scale of the monitoring system, "InFlow receives 50k flows per second from over 100 different network devices on the LinkedIn backbone and edge devices," and states, "InFlow requires storage of tens of TBs of data with a retention of 30 days." The article concludes, "Following the successful onboarding of flow data to a real-time table on Pinot, freshness of data improved from 15 mins to 1 minute and query latencies were reduced by as much as 95%."

The sFlow-RT real-time analytics engine provides a faster, simpler, more scalable alternative for flow monitoring. sFlow-RT radically simplifies the measurement pipeline, combining flow collection, enrichment, and analytics in a single programmable stage. Removing pipeline stages improves data freshness: flow measurements represent an up-to-the-second view of traffic flowing through the monitored network devices. The improvement from minute to sub-second data freshness enhances automation use cases such as automated DDoS mitigation, load balancing, and auto-scaling (see Delay and stability).

An essential step in improving data freshness is eliminating IPFIX and migrating to an sFlow-only network monitoring system. Rapidly detecting large flows, sFlow vs. NetFlow/IPFIX describes how the on-device flow cache component of IPFIX/NetFlow measurements adds a further stage (and additional latency, anywhere from 1 to 30 minutes) to the flow analytics pipeline. sFlow, on the other hand, is stateless, eliminates the flow cache, and guarantees sub-second freshness. Moving from IPFIX/NetFlow is straightforward, as most of the leading router vendors now include sFlow support (see Real-time flow telemetry for routers). In addition, sFlow is widely supported in switches, making it possible to efficiently monitor every device in the data center.
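The latency difference can be illustrated with a rough back-of-the-envelope model. The sketch below is not taken from the referenced articles. It assumes a simplified flow cache that first reports a long-running flow when its active timeout expires, and random 1-in-N packet sampling in which each sample is exported immediately. The function names and the example timeout, sampling rate, and packet rate are hypothetical.

```python
def flow_cache_delay_s(active_timeout_s: float) -> float:
    """Delay before a long-lived flow is first exported by a flow cache.

    Simplified assumption: the record is held on the device until the
    active timeout expires, so the delay equals the timeout.
    """
    if active_timeout_s <= 0:
        raise ValueError("active_timeout_s must be positive")
    return active_timeout_s


def expected_first_sample_delay_s(sampling_rate: int,
                                  packets_per_second: float) -> float:
    """Mean time until the first packet of a flow is sampled.

    With random 1-in-N sampling, the number of packets seen before the
    first sample is geometrically distributed with mean N, so the mean
    delay is N divided by the flow's packet rate.
    """
    if sampling_rate < 1 or packets_per_second <= 0:
        raise ValueError("sampling_rate must be >= 1 and packet rate positive")
    return sampling_rate / packets_per_second


# Hypothetical example values: 60 s active timeout versus 1-in-1000
# sampling of a large flow sending 100,000 packets per second.
cache_delay = flow_cache_delay_s(60.0)
sample_delay = expected_first_sample_delay_s(1000, 100_000.0)
print(f"flow cache: {cache_delay:.2f} s, packet sampling: {sample_delay:.2f} s")
```

The model ignores export and transport delays, which apply to both approaches. Its only purpose is to show why a large flow is seen quickly under packet sampling while a flow cache delays the first report by the timeout.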

Removing pipeline stages increases scalability and reduces the cost of the solution. A single instance of sFlow-RT can monitor tens of thousands of network devices and over a million flows per second, replacing large numbers of scale-out pipeline instances. Removing the need for distributed message queues improves resilience by decoupling the flow monitoring system from the network so that it can reliably deliver the visibility needed to manage network congestion "meltdown" events (see Who monitors the monitoring systems?).

Introducing a programmable flow analytics stage before storing the data significantly reduces storage requirements (and improves query latency) since only metrics of interest will be computed and stored (see Flow metrics with Prometheus and Grafana).
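The idea of computing metrics before storage can be sketched in a few lines. The example below is a generic illustration and not sFlow-RT's implementation: it assumes a hypothetical `FlowRecord` type and a hypothetical `top_talkers` helper that sums bytes per source/destination pair and keeps only the largest entries, so that a handful of values is stored instead of every raw record.

```python
from collections import Counter
from typing import Iterable, List, NamedTuple, Tuple


class FlowRecord(NamedTuple):
    """Hypothetical raw flow record, for illustration only."""
    src: str
    dst: str
    nbytes: int


def top_talkers(records: Iterable[FlowRecord],
                n: int = 2) -> List[Tuple[Tuple[str, str], int]]:
    """Sum bytes per (src, dst) pair and return the n largest pairs."""
    totals: Counter = Counter()
    for record in records:
        totals[(record.src, record.dst)] += record.nbytes
    return totals.most_common(n)


# Made-up sample data: four raw records reduce to two stored metrics.
raw = [
    FlowRecord("10.0.0.1", "10.0.0.2", 1500),
    FlowRecord("10.0.0.1", "10.0.0.2", 500),
    FlowRecord("10.0.0.3", "10.0.0.4", 900),
    FlowRecord("10.0.0.5", "10.0.0.6", 100),
]
for (src, dst), total in top_talkers(raw):
    print(src, dst, total)
```

Only the aggregated pairs reach the time series database. The raw records can be discarded once the metric is computed, which is where the storage saving comes from.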

The LinkedIn paper mentions that they are developing an eBPF Skyfall agent for monitoring hosts. The open source Host sFlow agent extends sFlow to hosts and can be deployed at scale today (see Real-time Kubernetes cluster monitoring example).

`docker run -p 8008:8008 -p 6343:6343/udp sflow/prometheus`

Trying out sFlow-RT is easy. For example, run the above command to start the analyzer using the pre-built sflow/prometheus Docker image. Configure sFlow agents to stream telemetry to the analyzer and access it through the web interface on port 8008 (see Getting Started). The default settings should work for most small to moderately sized networks. See Tuning Performance for tips on optimizing performance for larger sites.
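Once the analyzer is running, port 8008 also serves a REST API that can be scripted. The following is a minimal sketch, assuming the analyzer is reachable at `localhost:8008`. The endpoint paths follow the sFlow-RT REST API as commonly documented, but should be verified against the API reference of the running instance. The flow name `pair` and the helper function names are hypothetical.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8008"  # assumption: analyzer started locally


def flow_definition_request(name: str, keys: str, value: str,
                            base_url: str = BASE_URL) -> urllib.request.Request:
    """Build a PUT request that defines a flow metric on the analyzer."""
    body = json.dumps({"keys": keys, "value": value}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/flow/{name}/json",
        data=body,
        method="PUT",
        headers={"Content-Type": "application/json"},
    )


def active_flows_url(name: str, max_flows: int = 10,
                     base_url: str = BASE_URL) -> str:
    """URL for the largest active flows across all agents."""
    return f"{base_url}/activeflows/ALL/{name}/json?maxFlows={max_flows}"


def top_flows(name: str, max_flows: int = 10, base_url: str = BASE_URL):
    """Fetch and decode the largest active flows (requires a live analyzer)."""
    with urllib.request.urlopen(active_flows_url(name, max_flows, base_url)) as resp:
        return json.load(resp)


def main() -> None:
    """Define a source/destination byte flow, then print the top flows."""
    request = flow_definition_request("pair", "ipsource,ipdestination", "bytes")
    urllib.request.urlopen(request).close()
    for flow in top_flows("pair"):
        print(flow)
```

Call `main()` with the analyzer running and at least one sFlow agent sending data. Newly defined flows only report traffic seen after the definition is installed, so results may be empty at first.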
